AI is now more intelligent than me

And you.

Let me start by saying this is 100% me, no AI, so expect typos.

I think it’s important to state this clearly nowadays given the way things are going, the way I’m going. The way I’ve gone. I’m writing this in an attempt to sort things out in my head, to get some clarity about what’s gone on since the start of the year. I’ve changed, that’s for sure. I’ve gone back on a lot of things I said not so long ago. I’ve made some people really happy, others I think I’ve just pissed off. I’ve spent more time thinking about who I am and what I stand for than is reasonable and honestly, I don’t like some of the conclusions. But hey, we’re all doing the best we can right now. There’s no playbook for this. We’re winging it.

The title of this blog is Paleolithic Principles (for a Digital World). My intention was to write about rural life, minimalism and digital disconnection yet I’ve done basically nothing but write about AI for almost a year. I came to preach analog and that hasn’t happened. And here I am again, about to launch into another essay mostly about AI. I think you’ll find this one more interesting though.

Let’s start by squeezing the whole story into one cynical paragraph.

I was born. I studied a lot. I started programming when I was 10. I spent almost 10 years building a huge software project. In 2023 ChatGPT arrived. It was cute and funny. It got better. By the end of 2024 it was coding well enough that non-developers were building half-decent apps. I hated it, swore I’d never use it and started planning my early retirement. My software company fizzled out. I ignored and/or hated on AI for the whole of 2025. Something big happened in AI at the end of 2025. I spent January 2026 completely immersed in AI, embraced it completely and now everyone (including myself) thinks I’m a sellout.

There’s a lot to unpack here.

2025

By all measures, I lost 2025 to denial. From the outside it might look like I decided to take a well-earned rest. Truthfully, I really was exhausted from almost a decade of building mind-bendingly complex software. The ERP system we created was a genuine triumph of engineering but by early 2025 it was clear to all of us that it wouldn’t be commercially viable, so we wound down new development on the project. In parallel, the threat posed by AI to the SaaS industry was gradually starting to crystallise. To be clear, creating software was still hard at this point, but everyone could see what was happening and those of us who are middle-aged enough to have lived through multiple digital ‘revolutions’ (internet, smartphone, social media) have developed a pretty good nose for seeing where things are going early, and sensing just how quickly it can happen. On the 2nd of January 2025 I published the essay SaaS is Dead. SaaS is the New SaaS in which I articulated the thought that was on everyone’s minds. It was at this point that I decided to turn my back on software, and to some extent on the entire digital-industrial complex. We even sold the house and moved to the country.

All I can say is that at that point, in early 2025, I fully and earnestly intended to jack it all in.

I’m not sure I can communicate how much hatred I had for AI for almost the whole of 2025. Firstly, it was kind of shit. Not shit enough to be unusable - just good enough to be convincing. I’ve written about this a number of times already. AI, to most people, was just ChatGPT, and whilst it was getting better at a staggering velocity, all I could see was bland hallucinations. I was watching the brains of people around me turn to mush in front of my eyes. Intelligent, questioning people parroting lines from ChatGPT, treating it as an all-knowing oracle. I was determined not to let that happen to me, so I approached AI in the exact same way I’ve approached social media (and that has worked very well for me) - total abstinence.

Secondly, I outright refused to use ‘vibe coding’ tools. I’d decided I was a ‘programmer’, a ‘craftsman’, and using those tools was degrading - like asking a master carpenter to switch to assembling IKEA furniture for a living. That’s honestly how I saw it, and I didn’t want anything to do with it. I fully intended to get completely out of the software game and do something else. I made no attempt to keep this to myself either, I told everyone. I wish I could just keep my mouth shut.

Thirdly, and most disturbingly, anyone even remotely connected to tech seemed to understand the gravity of the economic catastrophe that was (and IMHO still is) coming our way, yet no-one was doing or saying anything meaningful in the way of prevention or preparation. The odd essay on UBI, the odd call for regulation, the occasional half-arsed newspaper column. Humanity seemed to be sleepwalking into a socioeconomic tsunami of its own making and if nobody was interested in joining me in fighting back, I figured my only option was to hunker down in my little village and get chickens and guns.

In summary - I hated what AI had done to my ‘craft’, what it was doing to everyone around me, and what it was about to do to the economy and social fabric of every developed country in the world.

2026

Here is a list of software I built in January 2026:

SiteStakk: a multi-tenant bulk site building platform

PWR ERP: a full custom cloud ERP system for my sister’s influencer marketing business

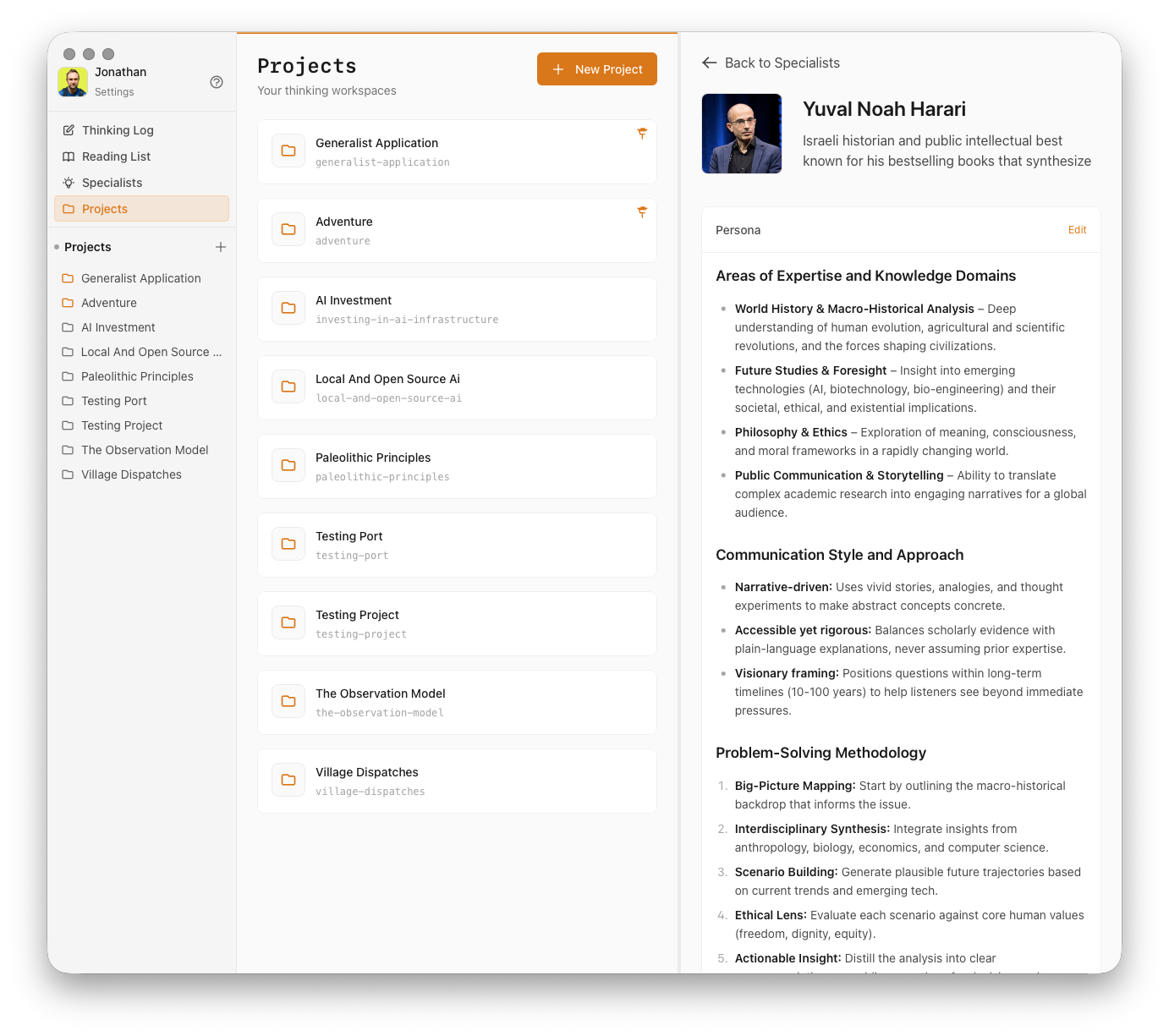

Generalist: a privacy-focused AI-assisted personal intelligence platform delivered as a cross-platform desktop app

RenderLab: a web app that uses AI to create photo-realistic renders of any angle in a house from simple 3D models

AI Training platform: an interactive, multi-user live training environment and full AI training course

At a conservative estimate, I produced 24-36 months of pre-2026 work in a single month. I’ve also built two polished marketing websites, done a tonne of consulting, teaching and writing, played around with some awesome knowledge management techniques, done some really groundbreaking research on personal projects and started a second Substack dedicated to the philosophy of AI as told by the agents themselves.

Without any shadow of a doubt, January 2026 has been the most ‘productive’ month of my life. And you know what? I’ve loved every minute of it. So clearly, something has changed. Did I sell out? Did I change my mind? Did I cave? Did I reconsider? Did I lie to everyone? Did I lie to myself? Did I repent? Do I still hate AI?

I’m still processing these questions and more. I don’t have a fully clear picture of exactly what happened, but I have some ideas and I’ll spend the rest of this essay discussing them.

I suspect some people who know me might be thinking that I was deluding myself all along, that I was always bound to embrace AI and stay in the digital world - and I suspect they might be right. It’s not easy tearing yourself away from an identity that has defined you your whole life. I’ve always been the computer guy. Perhaps that’s my destiny. Perhaps my dreams of spending the latter half of my life outdoors in contrast to the last 45 years that have been spent largely behind a desk are nothing but a delusion, or wishful thinking. I’d like to think not. I’d like to think there was, is, something in it. I’m being honest here - just saying what I’m thinking. I genuinely don’t want to be a keyboard warrior for the rest of my life. The adaptation process may take longer than I originally planned for.

Another take, and this one cuts a bit deeper, is that my change of direction is a moral failure. “Ah come on,” I can hear you saying, “no-one is thinking that”. But I am. Not with any certainty. I don’t KNOW that I’ve been untrue to myself, but I do suspect it. You see I really am ‘against’ AI in so many ways. If you gave me a choice right now, AI or no AI, I’d choose ‘no AI’ in a heartbeat. I still believe that AI is a massive net negative for humanity. In fact, I don’t think I’ve ever been as sure of anything in my life. I don’t believe in these ‘abundance for everyone’ narratives that the big AI CEOs are spinning. I think humanity’s house will burn because of AI - and this is me fanning the flames. If that’s not a moral failure, then what is? Anyway, you be the judge. I’m still on the fence.

So what the hell happened?

I’ve been thinking all week about how to express this. I could, and will, tell you about new models, new techniques and new software. I could, and will, talk to you about the ‘January 2026’ effect that has everyone in the AI community hyped to the tits. But here’s the absolute clearest statement I can come up with:

AI is now more intelligent than me.

This has happened in the last month. And it’s irreversible.

If you think I’m exaggerating, go and ask anyone who’s living this revolution in first person. No-one’s going to disagree.

Let that sink in for a minute. Just stop and take in the pure magnitude of that statement. Last month I was proud and protective of my intellect. It was a rare gift, an asset, a weapon. Now, it is meaningless.

Don’t get me wrong, I’d still rather be intelligent than dumb. But intelligence, intelligence far greater than mine, is now a commodity that can be had for £20 a month. Being intelligent is now a ‘nice-to-have’, like being able to sing or draw.

I’d like to dig into this idea of intelligence with reference to the developments of the last month or so, so first let me briefly summarise what has happened in case you haven’t been following. Anthropic, a competitor to OpenAI, basically hit the ball out the park with two products.

Firstly, their new frontier model Opus 4.5 (now superseded by Opus 4.6 - yes, that’s how fast things are moving) broke a utility threshold. Yes, it beat other models on all sorts of boring benchmarks, but there was a general feeling that this was a step-change in ability. The model itself was only an incremental improvement over others in its class, nothing revolutionary, but it was now just good enough that you could reliably and effectively DO stuff with it. Not just code, although it was truly excellent at coding. It was good across the board, it could think for minutes at a time and come up with genuinely useful insights, it could command tool use with incredible dexterity.

Secondly, the explosion in popularity of Claude Code and the subsequent release of CoWork in just 10 days were just enough of an improvement in ‘agentic AI patterns’ that, when taken in combination with the new model, you could now do really interesting work, fast, reliably and without too much frustration.

Again, taken out of context, these were just more unremarkable incremental improvements in a continuous chain stretching back to the release of ChatGPT in 2023. Except they weren’t, and everyone knew they weren’t. Something had changed.

The first time I used Claude Code I sat there with my mouth open and knew right there and then that I’d never write a line of code again in my life. I haven’t looked at a single line all month in fact. Why would I? Claude is much, much better at coding than I ever was. Right now he still makes the odd architectural error and I’m not going to suggest that it’s not an advantage to have 10 years of software engineering under your belt - but it’s a fast-closing window. The same goes for all the other arguments I hear for the enduring role of ‘humans in the loop’. Taste. Design. Creativity. Ideas. I can only think that people who make these arguments have never used Claude. Don’t have any ideas for software? Try opening Claude and writing “Give me 5 ideas for a truly revolutionary piece of software”. You will be astounded. Design? Try saying “Claude, use your /frontend-design skill to make this website look amazing”. Better than any designer I’ve ever hired. Ideas? Ask it to take some random thoughts you have and come up with something wildly creative. I’m sorry. I’m truly sorry to be writing this. I wish wish wish it wasn’t true. But it is. Claude is better than me, and better than you, at everything. Full stop. The only people that haven’t realised this are people who have never tried or aren’t yet ‘good at AI’. I’m going to come straight out and say it: being ‘good at AI’ is, right now, the only relevant skill you can acquire.

And guess what? I’m good at AI. I’m very fucking good at AI.

Why? How? Hard to say. Maybe my technical background. Maybe a high level of meta-intelligence (being intelligent about deploying/using one’s intelligence). Maybe just the right place at the right time. I don’t know.

So in the end, I’ve decided not to fight it. Maybe you’ll think that’s lazy and maybe you’d be right. To be honest, I don’t have that many working years left in me, so there’s definitely an element of harvesting while the crop is good. Right now there’s a massive ‘capability gap’ (the chasm between what AI is capable of and what people are actually doing with it) that I fully intend to take advantage of. It’s a kind of arbitrage. I don’t know how long this is going to last - perhaps a year or two, or perhaps I’ll always be able to stay ahead of the pack by continually exploiting this capability gap. That sounds pretty exhausting though.

Right now, I’m leaning into it and enjoying it. Building software is actually fun now, rather than ‘interestingly tedious’ which is perhaps the best that could previously have been said. I can dream up features and have them live in 20 minutes. In a previous life you’d have to stick religiously to the spec to avoid ‘feature creep’. Feature creep is now the starting point. It’s all-you-can-eat at the software development buffet and I intend to make myself sick. Of course I’ve come to the realisation (and by that I mean Jessi has informed me) that I was never a ‘coder’, I was a creative and now I can let my creativity run wild.

It’s not just software either. Now I’ve totally accepted the fact that Claude is more intelligent than I’ll ever be, I’m having a blast using him as a conversation partner to delve deep into weird and twisty parts of the philosophical universe that are just unvisitable otherwise. Claude knows everything about everything and can connect all these loose wires that have been hanging in my mind for 20 years. It’s a strange and ironic situation, but I feel Claude is making me more intelligent now, rather than less (which is how I was starting to feel in 2024 with ChatGPT). The last book I read, ironically, was a book on rationality, wisdom and intelligence (Map and Territory, Eliezer Yudkowsky). It might turn out to be the last book I ever read.

I’m probably starting to sound like a shill for Anthropic, so let’s dial it back a bit. I’m sure all of this has got you feeling pretty uneasy, maybe even defensive. Perhaps you can’t conceive of a world where we humans are no longer top dog. Perhaps you can’t accept that there may be no role for humans, not even ideas, creativity and taste. That’s normal. I’ve been struggling with this for a year.

Perhaps, though, you’re mildly disgusted that I’ve gone back on my decision to kick all this digital shit to the curb. Maybe you think I should be out on the streets campaigning for UBI or sabotaging datacentres. Maybe you think I’m doing myself a disservice embracing something that I wish didn’t exist. These are complicated issues and I’m still thinking them through myself.

My current conception of how AI fits into my life is that of a ‘barbell approach’. Perhaps you’ve heard of a barbell approach in other areas of life? In investing, for example, a barbell approach is where you hold a lot of simple, boring investments like tracker funds and a pot of exciting, high-risk assets like crypto or thematic ETFs. You attack both ends of the spectrum and ignore the middle. The same can be applied to fitness: slow, steady, zone 2 aerobic exercise and heavy lifting. Basically a ‘barbell approach to anything’ is where you combine two extremes and skip all the dross at the centre.

So I’m going ‘barbell’. Simple, outdoor, rural living at one end. Razor-edge AI at the other.

A strange combo, but it’s working for me.
